Analysing the volatility of financed emissions

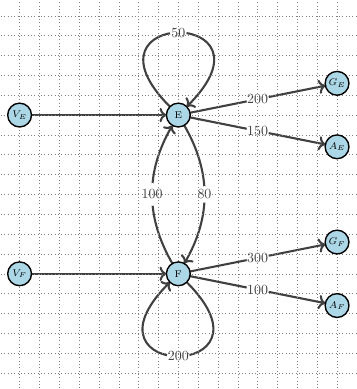

In this post we analyse the variability profile of the reported financed emissions of financial institutions such as banks. Using a conceptual deep-dive into the calculation procedure, we isolate the more fundamental factors that contribute to variability.

The discussion primarily concerns loan portfolios, but similar considerations apply to other capital instruments. The context is the so-called Scope 3, Category 15 emissions in the terminology of the GHG Protocol, namely the emissions of the companies financed by the loan portfolio.