Machine learning approaches to synthetic credit data

The challenge with historical credit data

Historical credit data are vital for a host of credit portfolio management activities, ranging from assessing the performance of different types of credits to constructing sophisticated credit risk models. Such is the importance of data inputs that, for risk models affecting significant decision-making or external reporting, there are even prescribed minimum requirements for the type and quality of the historical credit data used.

Securing adequate credit data can pose significant challenges for a number of reasons. To name a few:

- The intrinsic paucity of credit events: There might be a genuine shortage of data because the number of obligors in the observation window is small. An extreme example is sovereign risk, with only about two hundred countries to observe. This scarcity might be further compounded by higher credit quality (e.g., large blue chip corporations), which means there are relatively few credit events overall.

- The sensitivity of client related data: While properly filtered and anonymized data sets can in many cases make it quite difficult to identify any concrete entity, the extra work required to achieve this might dissuade publication.

- A general reluctance to release information: Even when datasets are no longer commercially sensitive (e.g., older portfolios) there might be a reluctance to reveal any internal information (see also the previous point on the extra work required).

- The quality of the existing data: Even if none of the above constraints applies, the collection, processing and availability of historical credit data may suffer from data quality problems which (in extremis) would defeat the purpose of publication.

The promise of synthetic credit data

This is the first blog post of a series dedicated to the discussion of synthetic credit data.

While it is impossible to generate information out of no information, creating synthetic data using modern machine learning approaches helps minimize the impact of limited historical data (or, equivalently, maximize the benefits of existing information). This can be achieved by using suitably generated data for building, priming, testing and validating the systems, models and processes that are used in credit decision making, as well as for user training.

In this first post we discuss the definition of synthetic credit data, their advantages and limitations, and first examples in the context of machine learning approaches to credit risk analysis.

What are synthetic credit data?

Synthetic Credit Data are computer generated credit data (e.g., produced using generative machine learning algorithms) that:

- Refer to the same client / product characteristics tracked by a production system

- Adopt the same credit lifecycle typology (possible events and measured elements)

- Conform to the actual schema (data template) that is being emulated

- Have the same or similar statistical properties as the credit data set that is being emulated
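The last two criteria lend themselves to simple automated checks. The following is a minimal sketch, assuming a made-up four-field schema and toy records; the field names, the `conforms` and `relative_gap` helpers and the "reference" sample are all hypothetical stand-ins, not the schema of any production system.

```python
import random
import statistics

# Hypothetical schema (field name -> expected type), for illustration only.
SCHEMA = {"borrower_id": int, "income": float, "rating": str, "defaulted": bool}

def conforms(record, schema=SCHEMA):
    """True if the record has exactly the schema's fields with matching types."""
    return record.keys() == schema.keys() and all(
        type(record[name]) is kind for name, kind in schema.items()
    )

def relative_gap(reference, synthetic, field):
    """Relative difference between the means of one numeric field."""
    ref_mean = statistics.fmean(r[field] for r in reference)
    syn_mean = statistics.fmean(r[field] for r in synthetic)
    return abs(syn_mean - ref_mean) / abs(ref_mean)

def toy_records(n, rng):
    """Toy generator standing in for both the emulated and the synthetic data."""
    return [
        {"borrower_id": i,
         "income": rng.gauss(50_000.0, 10_000.0),
         "rating": rng.choice(["A", "B", "C"]),
         "defaulted": rng.random() < 0.05}
        for i in range(n)
    ]

reference = toy_records(2000, random.Random(0))  # stand-in for the emulated data
synthetic = toy_records(2000, random.Random(1))  # stand-in for generated data

schema_ok = all(conforms(r) for r in synthetic)
income_gap = relative_gap(reference, synthetic, "income")
print(schema_ok, round(income_gap, 4))
```

In practice one would compare full distributions and dependencies between fields rather than a single mean, but the structure of the check stays the same.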

The possibilities offered by synthetic credit data

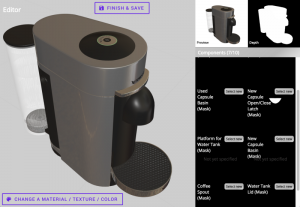

The use of synthetic data has become increasingly popular in many areas of machine learning that use classification algorithms (a form of supervised learning). A common issue tackled this way is the (non-)availability of labelled data, namely data that include both the characteristics (attributes) of an entity (e.g., an image of a coffee machine) and the label (identification as a ‘coffee machine’).

In the optical recognition example the synthetic data problem can be approached by programmatically generating (e.g., using a 3D rendering system) graphical representations of coffee machines (among other objects), thus producing data with known characteristics. An optical recognition system can then be trained on such data, before being fine-tuned using the scarcer (and more expensive to procure) real labelled data (images of coffee machines that have been recognized / identified by humans).

Synthetic credit data generation aims to overcome some of the challenges of inadequate historical credit data with the production of high-quality, controlled and fully understood data samples.

The essence of the method is to employ a collection of consistent generative models that create synthetic snapshots of the initial and future states of a desired credit portfolio. The data sets thus generated can then be treated as historical data sets, allowing for a variety of uses.

The generative models can be trained on existing real data but also tuned with the use of expert insights to create data sets that closely resemble the expected properties of actual data. With sufficient effort synthetic credit data can be made indistinguishable from any available real data (in a statistical sense) as the generative algorithms can be made to essentially include all available information.

Synthetic Credit Data generation can be thought of as a case of large scale missing data imputation. The difference from the usual approaches to data imputation lies in the tolerance for possible bias and distributional assumptions. In missing data imputation a great deal of thought must be dedicated to avoiding the introduction of biases. In contrast, in the case of synthetic data, the selection and use of the underlying generative models is by definition opinionated and expert based. This is a most important aspect to keep in mind, as it determines the acceptable use of the generated datasets.

How is it done?

There are many useful variations but in a fully simulated lifecycle the basic steps involved in synthetic credit data creation would be captured in the following list:

- Generation of a sufficiently detailed credit system (collection of borrowers and lenders), including at a minimum:

  - Generation of Borrower characteristics (both static details and initial values for dynamic aspects)

  - Generation of Credit Product details (e.g., conforming to Loan Product Termsheets and Templates)

- Simulation of the credit/economic system (possibly including variables such as broad macroeconomic and sectoral factors)

- Calculation of simulated internal credit ratings or other ex-ante credit state indicators (e.g., credit scores) for all the desired snapshots

- Calculation of the individual debtor response (e.g., realization of any credit events) over the desired period of time

- Compilation and distribution of synthetic credit data snapshots in the required data formats

Depending on the use case, various subsets of the above workflow may be adequate. Further, each of the above steps admits a large number of variations.
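To make the workflow above more concrete, here is a deliberately small sketch of one possible variation. Everything in it is an assumption made for illustration: the function names, the field names, the three PD levels, the AR(1) macro factor and the one-factor threshold rule used to trigger defaults are our choices for the example, not a prescribed method.

```python
import random
from statistics import NormalDist

rng = random.Random(42)  # fixed seed so the sketch is reproducible

def make_borrowers(n):
    """Borrower characteristics: a static sector and an assumed per-period PD."""
    return [
        {"id": i, "sector": rng.choice(["retail", "sme"]),
         "pd": rng.choice([0.01, 0.03, 0.08])}
        for i in range(n)
    ]

def make_loans(borrowers):
    """Credit product details: one hypothetical term loan per borrower."""
    return [
        {"borrower_id": b["id"], "amount": round(rng.uniform(5_000, 50_000), 2),
         "term_years": rng.choice([3, 5])}
        for b in borrowers
    ]

def simulate_macro(periods, persistence=0.5):
    """Economic system: an AR(1) factor that is standard normal in every period."""
    z, path = rng.gauss(0, 1), []
    for _ in range(periods):
        z = persistence * z + (1 - persistence**2) ** 0.5 * rng.gauss(0, 1)
        path.append(z)
    return path

def rating_for(pd):
    """Ex-ante credit indicator: map the PD to a coarse rating grade."""
    return "A" if pd <= 0.01 else "B" if pd <= 0.03 else "C"

def simulate_snapshots(borrowers, macro_path, rho=0.2):
    """Debtor response and snapshot compilation, one snapshot per period."""
    threshold = {b["id"]: NormalDist().inv_cdf(b["pd"]) for b in borrowers}
    defaulted, snapshots = set(), []
    for period, z in enumerate(macro_path):
        rows = []
        for b in borrowers:
            if b["id"] not in defaulted:
                # Latent credit quality: shared macro factor plus own noise.
                latent = rho**0.5 * z + (1 - rho) ** 0.5 * rng.gauss(0, 1)
                if latent < threshold[b["id"]]:
                    defaulted.add(b["id"])  # default is treated as absorbing
            rows.append({"period": period, "borrower_id": b["id"],
                         "rating": rating_for(b["pd"]),
                         "defaulted": b["id"] in defaulted})
        snapshots.append(rows)
    return snapshots

borrowers = make_borrowers(500)
loans = make_loans(borrowers)
snapshots = simulate_snapshots(borrowers, simulate_macro(periods=4))
for rows in snapshots:
    print(rows[0]["period"], sum(r["defaulted"] for r in rows))
```

Each function corresponds to one step of the list, so individual steps can be swapped for richer models (or dropped) without touching the rest.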

Generative Models

Let's turn next to the nature of the generative models that can be used. There are two broad types of algorithms:

- Monte Carlo Simulation: Creating samples by drawing from a postulated (multi-dimensional) distribution

- Agent-based modeling: A more elaborate simulation framework that aims to mimic the actual credit system more closely (at the expense of significantly higher complexity / assumptions)
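As an illustration of the Monte Carlo route, the sketch below draws borrower attributes from a postulated two-dimensional distribution. The choice of attributes (income and credit score), the distribution parameters and the `sample_borrowers` and `pearson` helpers are assumptions made for the example.

```python
import math
import random

def sample_borrowers(n, corr=0.6, seed=1):
    """Draw (income, credit_score) pairs from a postulated bivariate normal."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        # 2x2 Cholesky step: z_score has correlation `corr` with z1.
        z_score = corr * z1 + math.sqrt(1 - corr**2) * z2
        income = math.exp(10.5 + 0.4 * z1)  # log-normal income (assumed)
        score = 650 + 60 * z_score          # normal credit score (assumed)
        rows.append((income, score))
    return rows

def pearson(xs, ys):
    """Plain Pearson correlation, used to check the sampled dependence."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

sample = sample_borrowers(5000)
log_income = [math.log(income) for income, _ in sample]
scores = [score for _, score in sample]
sample_corr = pearson(log_income, scores)
print(round(sample_corr, 2))
```

The sampled correlation between log-income and score should land close to the postulated `corr`, which is the kind of sanity check worth running on any postulated distribution before the data are used further.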

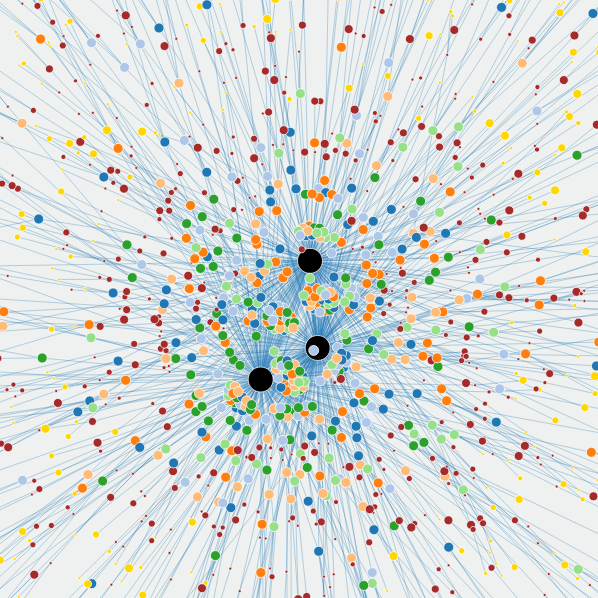

Mathematically we can represent the relationship of generative models with credit risk classification systems as follows. A credit risk assessment model is a mapping $f\colon X \to Y$ of observed measures (denoted also as covariates, characteristics) X to a credit outcome Y, expressed as the conditional probability $P(Y|X)$.

In contrast, a generative model involves the joint modelling of both observables and outcomes, $P(X, Y)$.

Given the generative model, conditioning on X to obtain simulated outcomes $P(Y|X)$ is (conceptually) simple. In addition, it opens up the possibility to calculate $P(X|Y)$, namely the realizations of observed variables X consistent with a set of outcomes Y, which is known in a risk management context as reverse stress testing.
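For reference, both conditionals follow from the joint distribution by the standard product rule and Bayes' theorem (written here for discrete variables; for continuous X the sums become integrals):

$$
P(X, Y) = P(Y|X)\, P(X) = P(X|Y)\, P(Y)
$$

$$
P(Y|X) = \frac{P(X, Y)}{\sum_{Y'} P(X, Y')}, \qquad
P(X|Y) = \frac{P(X, Y)}{\sum_{X'} P(X', Y)} = \frac{P(Y|X)\, P(X)}{P(Y)}
$$

A model that only provides $P(Y|X)$ lacks the marginal $P(X)$ and therefore cannot by itself produce $P(X|Y)$, which is why the reverse direction requires the full generative model.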

Advantages

The following sections describe a number of concrete advantages of using synthetic credit data.

Testing and Training of Production Systems

Synthetic data may be generated to meet specific criteria that are not found in the actual historical data sets but may be expected to be present in production environments. The abundance of synthetic data means that the relevant IT systems can undergo more comprehensive design and testing. It also provides a flexible means to train personnel to use the systems in a realistic context similar to what they will encounter in production.

Stress Testing of Risk Frameworks

The flexibility inherent in the specification of synthetic credit data can be useful for simulating conditions not yet encountered: where real data do not exist, synthetic data may be the only option. This approach helps to take rare or unexpected scenarios into account.

As mentioned above the intrinsic potential to perform reverse stress testing can be considered as a bonus that comes with the adoption of a full generative model.
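A toy version of reverse stress testing can be sketched by sampling from a joint model and keeping only the scenarios that produce the outcome of interest. The one-factor default model, the parameter values and the 8% loss threshold below are assumptions chosen for illustration; the sign convention is that a low macro factor means a bad economy.

```python
import random
from statistics import NormalDist, fmean

def simulate_joint(n_scenarios, n_loans=200, pd=0.03, rho=0.2, seed=7):
    """Sample (macro factor, portfolio default rate) pairs from a one-factor model."""
    rng = random.Random(seed)
    threshold = NormalDist().inv_cdf(pd)
    pairs = []
    for _ in range(n_scenarios):
        z = rng.gauss(0, 1)  # macro factor X for this scenario
        defaults = sum(
            rho**0.5 * z + (1 - rho) ** 0.5 * rng.gauss(0, 1) < threshold
            for _ in range(n_loans)
        )
        pairs.append((z, defaults / n_loans))
    return pairs

pairs = simulate_joint(2000)

# Condition on the outcome Y (default rate of at least 8%) ...
stressed = [z for z, rate in pairs if rate >= 0.08]

# ... and inspect the macro factor values X that are consistent with it.
mean_all = fmean(z for z, _ in pairs)
mean_stressed = fmean(stressed)
print(len(stressed), round(mean_all, 2), round(mean_stressed, 2))
```

Filtering simulated scenarios in this way is a crude rejection-sampling estimate of $P(X|Y)$; it becomes inefficient for very rare outcomes, where more targeted sampling schemes would be needed.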

Use in Model Validation

An existing risk model that has been developed on the basis of actual data can always be studied more extensively using synthetic data. This is particularly true in edge cases, where the sufficiency of the actual data sets is borderline. Some concrete examples among the variety of options:

- Help test the sensitivity of algorithms to common statistical problems (sample size, missing data).

- Provide a simulation environment to test Reject Inference methodologies
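The first of these points can be illustrated with a few lines of simulation. In the sketch below the "model" is simply the observed default rate, and the true PD of 2% is an assumption built into the synthetic data; because the truth is known, the estimation error at each sample size can be measured directly.

```python
import random
from statistics import pstdev

def estimate_spread(sample_size, true_pd=0.02, trials=200, seed=3):
    """Std. dev. of the estimated default rate across repeated synthetic samples."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(trials):
        defaults = sum(rng.random() < true_pd for _ in range(sample_size))
        estimates.append(defaults / sample_size)
    return pstdev(estimates)

# Spread of the estimate for increasingly large synthetic portfolios.
spread = {n: estimate_spread(n) for n in (100, 1000, 5000)}
for n, s in spread.items():
    print(n, round(s, 4))
```

The same pattern extends to missing data: values can be deleted from the synthetic sample under a known mechanism and the resulting distortion of the estimate measured against the known truth.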

Use in New Business or Product Approval

A typical circumstance where real data (almost by definition) is completely absent is when a new business initiative is launched or a new credit product is released. In this case the judicious use of generative models can help prime the entire pipeline of tools and models associated with a new product release, thereby significantly reducing the associated risks.

Broader benefits

It is worth adding to the specific list above some higher-level, but no less important, benefits of readily available synthetic datasets. They free quantitative / data science teams to develop an in-depth understanding of the overall systems and algorithms at their disposal, rather than being constrained by the (typically quite real) limitations of available datasets. In essence, they enable data science teams to better fulfill the science part of their role, for example through gedanken experiments and exploratory studies with minimal time, cost and project risk.

Finally, while synthetic data generation obviously does not eliminate the differential access to information between organizations of different size or resources, it works towards leveling the playing field by enhancing the ability of smaller organizations to build, and acquire confidence in, the tools underpinning credit portfolio management.

Limitations

There are obvious challenges in generating faithful synthetic data for complex credit systems and behaviors. The generative models creating the synthetic data must be estimated and validated on the basis of factual information about the system being modelled, yet the very motivation for the exercise is the relative absence of data!

The key to unlocking the potential of synthetic credit data is to understand the impact of their limitations. This impact is a function of the nature of the system being modelled (its complexity), the amount and quality of available real data and the appropriateness of any modelling assumptions (the existing expertise and know-how).

- Synthetic Credit Data are clearly not a substitute for real data, which means that, in particular for regulatory models, their use must be limited to the auxiliary roles mentioned above. Eventual production models must always be supported by the available factual evidence. Yet, if used properly, synthetic data can only strengthen the corresponding model development pipelines.

- Generating synthetic credit data is an important process in its own right and may inadvertently introduce issues (hence costs and risks). It is therefore important that it becomes a recognized element of the model lifecycle rather than a hidden and ad-hoc tool in model development.

- The quality of the outcomes will reflect the quality of the generative models (including their biases) which means that not all synthetic data will be useful for all purposes. Proper identification and mapping of fitness-for-purpose of various procedures and algorithms is, thus, recommended.

Further Reading / Next Steps

We are rolling out a synthetic credit data API on a trial basis at the demo OpenCPM site, where instructions on generating and downloading a variety of synthetic credit data are provided. If you are interested in using this service (or simply in learning more about synthetic credit data) we encourage you to contact us.
